Kubernetes VPA: Limitations, Best Practices, and the Future of Pod Rightsizing

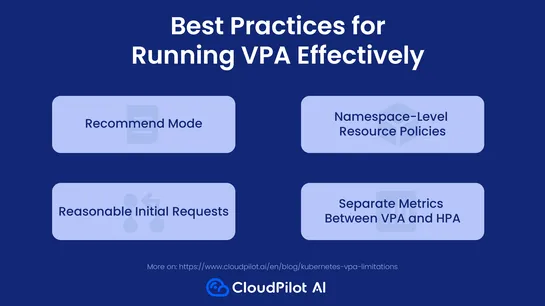

Kubernetes' Vertical Pod Autoscaler (VPA) tries to be helpful by tweaking CPU and memory requests on the fly. Problem is, it needs to bounce your pods to do it. And if you're also running Horizontal Pod Autoscaler (HPA) on the same metrics? Now they're fighting over control. VPA sees a narrow slice of ...
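
To make the HPA-versus-VPA clash concrete, here's a rough sketch of a check that flags the overlap. It is not part of Kubernetes or any official tool. It assumes the manifests are already loaded as plain dicts shaped like `autoscaling/v2` HPA and `autoscaling.k8s.io/v1` VPA objects, and the function names and the `web` Deployment are made up for illustration.

```python
# Sketch: flag an HPA and a VPA that target the same workload and act on
# the same resource (cpu or memory). All names here are hypothetical.

def _target(ref):
    """Reduce a scaleTargetRef/targetRef to a comparable (kind, name) tuple."""
    return (ref.get("kind"), ref.get("name"))


def hpa_resources(hpa):
    """Resource names (e.g. 'cpu') the HPA scales on via Resource metrics."""
    names = set()
    for metric in hpa.get("spec", {}).get("metrics", []):
        if metric.get("type") == "Resource":
            names.add(metric["resource"]["name"])
    return names


def vpa_resources(vpa):
    """Resources the VPA may change. Assumed to be cpu and memory unless
    every container policy lists controlledResources explicitly."""
    spec = vpa.get("spec", {})
    if spec.get("updatePolicy", {}).get("updateMode") == "Off":
        return set()  # recommendation-only: requests are left alone
    policies = spec.get("resourcePolicy", {}).get("containerPolicies", [])
    if not policies or any("controlledResources" not in p for p in policies):
        return {"cpu", "memory"}
    controlled = set()
    for p in policies:
        controlled.update(p["controlledResources"])
    return controlled


def conflicting_resources(hpa, vpa):
    """Return the resources both autoscalers act on for the same workload."""
    if _target(hpa["spec"]["scaleTargetRef"]) != _target(vpa["spec"]["targetRef"]):
        return set()
    return hpa_resources(hpa) & vpa_resources(vpa)


# Hypothetical manifests: both autoscalers point at Deployment "web".
hpa = {"spec": {"scaleTargetRef": {"kind": "Deployment", "name": "web"},
                "metrics": [{"type": "Resource", "resource": {"name": "cpu"}}]}}
vpa = {"spec": {"targetRef": {"kind": "Deployment", "name": "web"},
                "updatePolicy": {"updateMode": "Auto"}}}

print(conflicting_resources(hpa, vpa))  # {'cpu'}
```

If the check comes back non-empty, the usual ways out are to restrict the VPA to the resource the HPA ignores (via `controlledResources`), run the VPA in `Off` mode for recommendations only, or move the HPA to a custom or external metric.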