Introducing Coregit

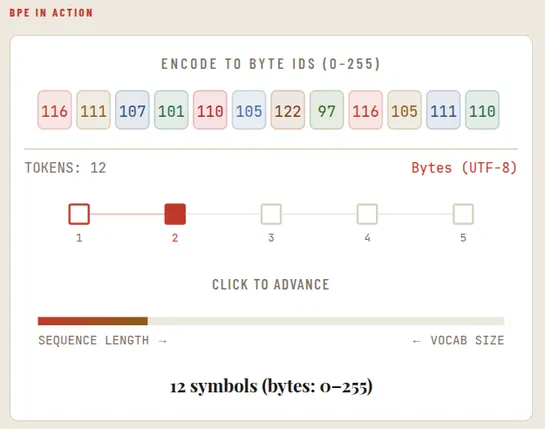

Coregit reimplements Git's object model in TypeScript and runs on Cloudflare Workers as a serverless edge Git API. Its commit endpoint accepts up to 1,000 file changes per request and replaces 105+ GitHub calls with one. Yes - one. It acknowledges writes in Durable Objects (~2 ms), then flushes objects to R..
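To give a feel for what reimplementing Git's object model in TypeScript involves, here is a minimal sketch of how Git derives an object ID: it hashes a header of the form `<type> <size>\0` followed by the raw content with SHA-1. This is not Coregit's code. The names `hashGitObject` and `hashBlob` are hypothetical, and the sketch assumes Node's `node:crypto` module for brevity rather than whatever hashing Coregit actually uses on Workers.

```typescript
import { createHash } from "node:crypto";

// The four object types in Git's object model.
type GitObjectType = "blob" | "tree" | "commit" | "tag";

// Hypothetical helper: computes the SHA-1 object ID Git assigns to an object,
// i.e. sha1("<type> <byte length>\0" + content).
function hashGitObject(type: GitObjectType, content: Uint8Array): string {
  const header = new TextEncoder().encode(`${type} ${content.byteLength}\0`);
  return createHash("sha1").update(header).update(content).digest("hex");
}

// Hypothetical convenience wrapper for UTF-8 text files stored as blobs.
function hashBlob(text: string): string {
  return hashGitObject("blob", new TextEncoder().encode(text));
}

console.log(hashBlob("hello\n"));
```

The result should match what `git hash-object` reports for a file containing the same bytes, which is a quick way to check a reimplementation like this against stock Git.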