PrismML unveils Ternary Bonsai: a family of 1.58-bit LMs in 1.7B, 4B, and 8B sizes. Models use ternary weights {-1,0,+1} with group-wise quantization.

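Each weight takes one of three values (-1, 0, +1), which carries log2(3) ≈ 1.58 bits of information. Each group of 128 weights shares one FP16 scale, adding 16/128 = 0.125 bits per weight. At roughly 1.7 bits per weight, that cuts memory by ~9x versus 16-bit and, according to PrismML, boosts benchmark scores.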
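To make the group-wise scheme concrete, here is a minimal NumPy sketch of ternary quantization with one FP16 scale per 128 weights. It is an illustration under stated assumptions, not PrismML's implementation: the absmean scaling rule and the names `quantize_ternary`, `dequantize`, and `bits_per_weight` are hypothetical choices, since the announcement does not say how scales are computed or how weights are packed.

```python
import math

import numpy as np

GROUP_SIZE = 128  # from the text: each group of 128 weights shares one scale


def quantize_ternary(weights: np.ndarray, group_size: int = GROUP_SIZE):
    """Quantize a 1-D float array to ternary codes plus one FP16 scale per group.

    Assumption: absmean scaling (scale = mean |w| over the group), one common
    choice for ternary weights. The actual Ternary Bonsai rule is not stated.
    """
    if weights.size % group_size != 0:
        raise ValueError("length must be a multiple of group_size")
    groups = weights.astype(np.float32).reshape(-1, group_size)
    scales = np.abs(groups).mean(axis=1, keepdims=True)
    scales = np.maximum(scales, 1e-8)  # guard against all-zero groups
    # Round to the nearest integer, then clamp into {-1, 0, +1}.
    codes = np.clip(np.rint(groups / scales), -1, 1).astype(np.int8)
    return codes, scales.astype(np.float16)


def dequantize(codes: np.ndarray, scales: np.ndarray) -> np.ndarray:
    """Reconstruct approximate weights: code * per-group scale."""
    return (codes.astype(np.float32) * scales.astype(np.float32)).reshape(-1)


def bits_per_weight(group_size: int = GROUP_SIZE, scale_bits: int = 16) -> float:
    """Ideal storage cost: log2(3) bits per ternary value plus the amortized scale."""
    return math.log2(3) + scale_bits / group_size


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.normal(size=4 * GROUP_SIZE).astype(np.float32)
    codes, scales = quantize_ternary(w)
    w_hat = dequantize(codes, scales)
    print("codes shape:", codes.shape, "scales dtype:", scales.dtype)
    print("bits per weight:", round(bits_per_weight(), 3))
    print("compression vs 16-bit:", round(16 / bits_per_weight(), 2))
```

The `bits_per_weight` figure assumes ideal packing at log2(3) bits per value. A simpler layout that stores each ternary value in 2 bits would cost 2.125 bits per weight and compress by about 7.5x instead, so the ~9x figure suggests a denser packing.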

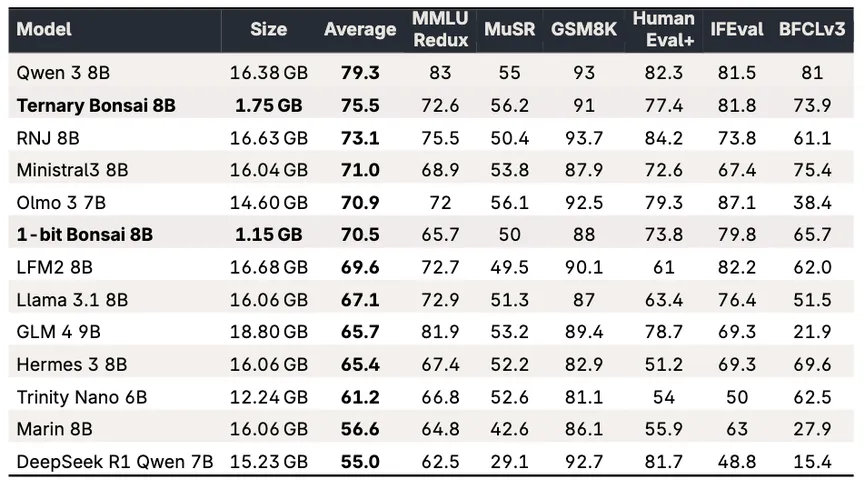

The 8B model scores a 75.5 benchmark average at 1.75 GB. It runs at 82 tokens/sec on an M4 Pro and 27 tokens/sec on an iPhone 17 Pro Max. The models ship under the Apache 2.0 license.