Grafana Tempo: Setup, Configuration, and Best Practices

A practical guide to setting up Grafana Tempo, configuring key components, and understanding how to use tracing across your services.

Just after Japan’s new Active Cyberdefence Law (ACD Law) came into effect — a major step toward reshaping the country’s cybersecurity posture — Japan’s largest brewer, Asahi Group, has suffered a ransomware attack that disrupted production and logistics nationwide. ⚠️ This incident starkly illustrat..

A developer rolled out Magicbrake, a no-fuss GUI for Handbrake aimed at folks who don’t speak command line. One button. Drag, drop, convert. Done. It strips Handbrake down to the bones for anyone who just wants their video in a different format without decoding flags and presets...

uv is a new Rust-powered CLI from Astral that tosses Python versioning, virtualenvs, and dependency syncing into one blisteringly fast tool. It handles your pyproject.toml like a grown-up: auto-generates it, updates it, keeps your environments identical across machines. Need to run a tool once without t..

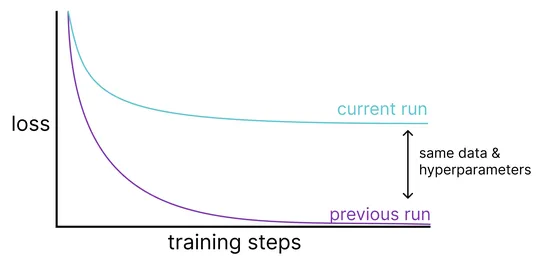

A sneaky bug in PyTorch’s MPS backend let non-contiguous tensors silently ignore in-place ops like addcmul_. That’s optimizer-breaking stuff. The culprit? The Placeholder abstraction, meant to handle temp buffers under the hood, forgot to actually write results back to the original tensor...

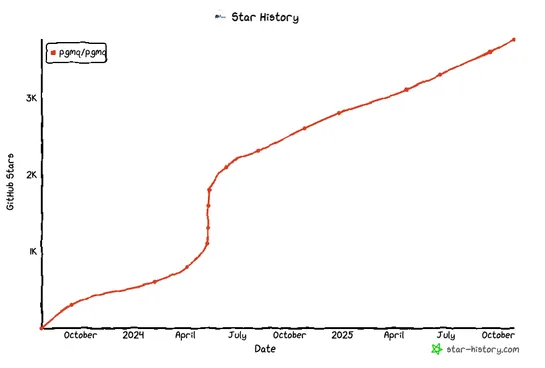

Postgres is pulling Kafka moves, without the Kafka. On a humble 3-node cluster, it held 5MB/s ingest and 25MB/s egress like a champ. Low latency. Rock-solid durability. Crank things up, and single-node Postgres flexed hard: 240 MiB/s in, 1.16 GiB/s out for pub/sub. Thousands of messages per second in q..

Netflix gutted Tudum’s old read path (Kafka, Cassandra, layers of cache) and swapped in RAW Hollow, a compressed, distributed, in-memory object store baked right into each microservice. Result? Homepage renders dropped from 1.4s to 0.4s. Editors get near-instant previews. No more read caches. No extern..

Bear Blog went dark after getting swarmed by scrapers. The reverse proxy choked first - too many requests, not enough heads-up. Downstream defenses didn’t catch it in time. So: fire, meet upgrades. What changed: Proxies scaled 5×. Upstream got strict with rate limits. Failover now has a pulse. Resta..

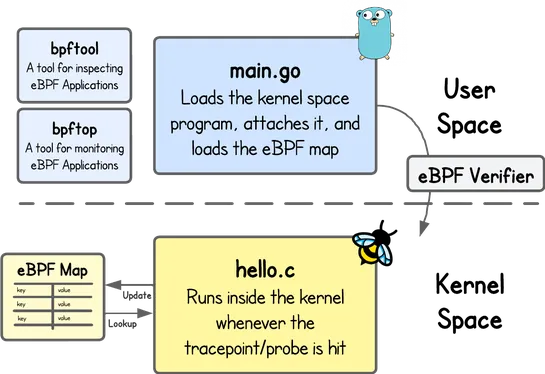

This hands-on path drops devs straight into writing, loading, and poking at basic eBPF programs with libbpf, maps, and those all-important kernel safety checks. It starts simple - with a beginner-friendly challenge - then dives deeper into the verifier and tools for runtime introspection...

Amazon EKS Auto Mode now runs the cluster for you, handling control plane updates, add-on management, and node rotation. It sticks to Kubernetes best practices so your apps stay up through node drains, pod failures, AZ outages, and rolling upgrades. It also respects Pod Disruption Budgets, Readiness Ga..
