Parsing 1 Billion Rows in Bun/TypeScript Under 10 Seconds

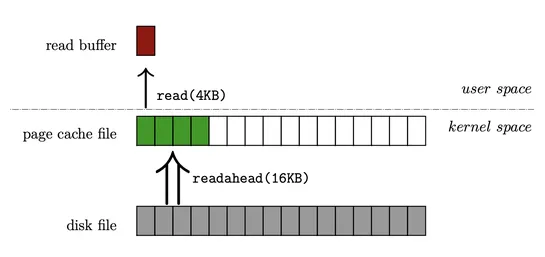

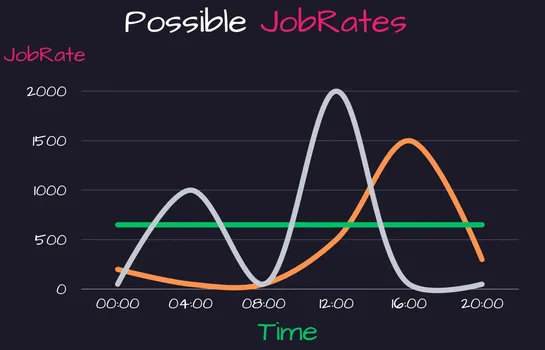

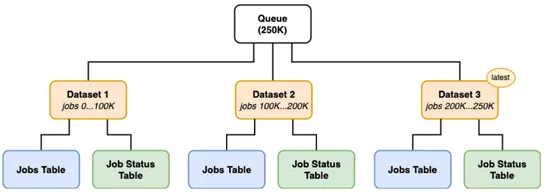

Bun tries to swallow files over 4GB and promptly chokes. The culprit? Its Buffer caps out at 4GB. The fix? Slice files into chunks under 4GB but keep the buffer lean, no more than 128KB, to keep things zippy. Then pull out the big guns: workers. This move fires up all CPU cores, slashing processing time from...
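To make the chunking idea concrete, here is a minimal sketch of how byte ranges might be planned before handing them to workers. It is not the author's code: the names `planChunks`, `ChunkRange`, `MAX_CHUNK_BYTES`, and `READ_BUFFER_BYTES` are hypothetical, and the only figures taken from the text are the 4GB ceiling and the 128KB buffer size.

```typescript
// Hypothetical constants based on the limits mentioned in the text.
const MAX_CHUNK_BYTES = 4 * 1024 ** 3 - 1; // stay strictly under 4GB
const READ_BUFFER_BYTES = 128 * 1024; // per-read buffer size a worker would use

// A half-open byte range [start, end) within the file.
interface ChunkRange {
  start: number;
  end: number;
}

// Split a file of `fileSize` bytes into contiguous ranges so that there is
// at least one range per worker (when the file is large enough) and no
// range exceeds `maxChunkBytes`.
function planChunks(
  fileSize: number,
  workerCount: number,
  maxChunkBytes: number = MAX_CHUNK_BYTES,
): ChunkRange[] {
  if (fileSize <= 0 || workerCount <= 0) return [];

  // Use more chunks than workers if an even split would break the size cap.
  const chunkCount = Math.max(workerCount, Math.ceil(fileSize / maxChunkBytes));
  const chunkSize = Math.ceil(fileSize / chunkCount);

  const ranges: ChunkRange[] = [];
  for (let start = 0; start < fileSize; start += chunkSize) {
    ranges.push({ start, end: Math.min(start + chunkSize, fileSize) });
  }
  return ranges;
}

// Example: a 10 GiB file with 2 workers needs 3 chunks to respect the cap.
const plan = planChunks(10 * 1024 ** 3, 2);
console.log(plan.length, plan.every((r) => r.end - r.start <= MAX_CHUNK_BYTES));
```

In a real parser each range boundary would also need to be moved forward to the next newline so that no row is split between two workers, and each worker would read its range in `READ_BUFFER_BYTES`-sized pieces rather than all at once. Both steps are left out of this sketch.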