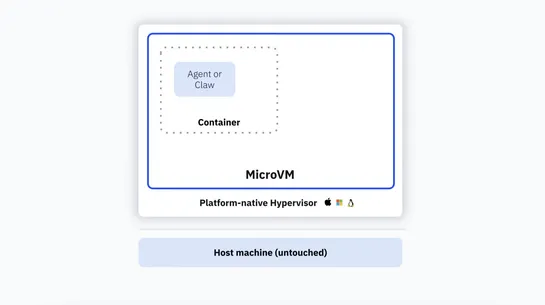

Why MicroVMs: The Architecture Behind Sandboxes

Docker Sandboxes puts each agent session in a dedicated microVM. Each microVM runs a private Docker daemon inside the VM boundary, which blocks access to the host. A new cross-platform VMM runs on macOS, Windows, and Linux hypervisors. It cuts cold-start times and supports full Docker build, run, and compose workloads.