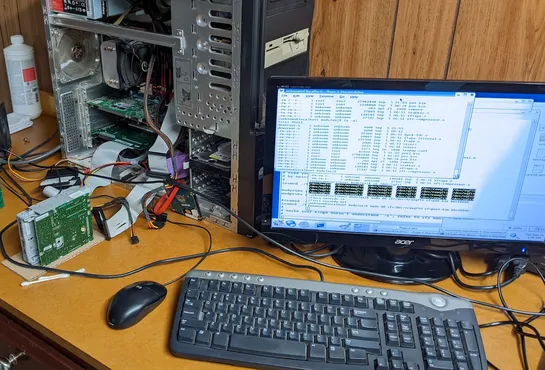

Using Claude Code to modernize a 25-year-old kernel driver

A long-dead Linux kernel driver for QIC-80 tape drives just got dragged into the present, with help from **Claude Code** and a lot of tinkering. It now builds cleanly and runs as a **standalone module** on **Linux 6.8**, playing nice with modern setups like **Xubuntu 24.04**.

**The bigger picture:**