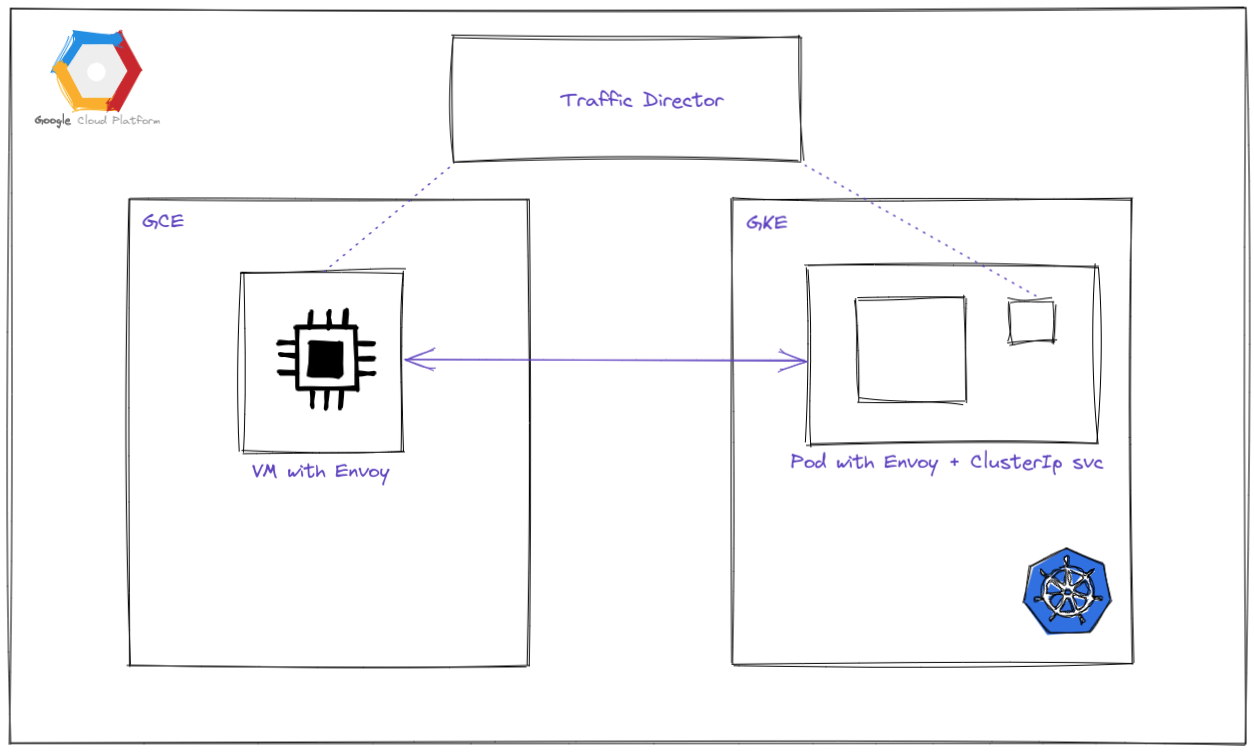

This post explores Traffic Director in GCP as a way to manage communication between VMs and pods in GKE through the Envoy proxy. Part 1 focuses on the VM setup.

Background

Traffic Director

Traffic Director is a managed control plane for application networking. It lets us set up communication between services in a manner similar to a service mesh, but managed by GCP, and with several additional features beyond a service mesh, such as:

- Multi Cluster Kubernetes

- Virtual Machine

- Proxyless gRPC

- Ingress and Gateways

- Multiple Environment

One of my interests is how to establish communication between VMs and containers in Kubernetes, since some of us may not have the expertise to write the configuration for, or to expose services from, Kubernetes to an external environment (inside a VM). In this story, I want to focus on how to set up communication between services running in GCE as virtual machines and in GKE as pods.

Note: This story is a conceptual test. For production configuration, please check the official documentation.

Test

To test how Traffic Director handles communication between VMs and pods, I use the following setup:

VM with Traffic Director

The first step is to deploy a VM template that has Envoy installed and connected to Traffic Director. The simplest way to do that is to add this option when creating the template:

--service-proxy=enabled

For the details, follow the documentation "Traffic Director setup for Compute Engine VMs with automatic Envoy deployment".

[One] Create a VM template with the service proxy enabled. As an example, I am using a simple VM that I had set up for a load balancer test.

This is only an example: a gcloud command that I had generated for other purposes, with the service proxy option added at the end. You may change it and create your own configuration.

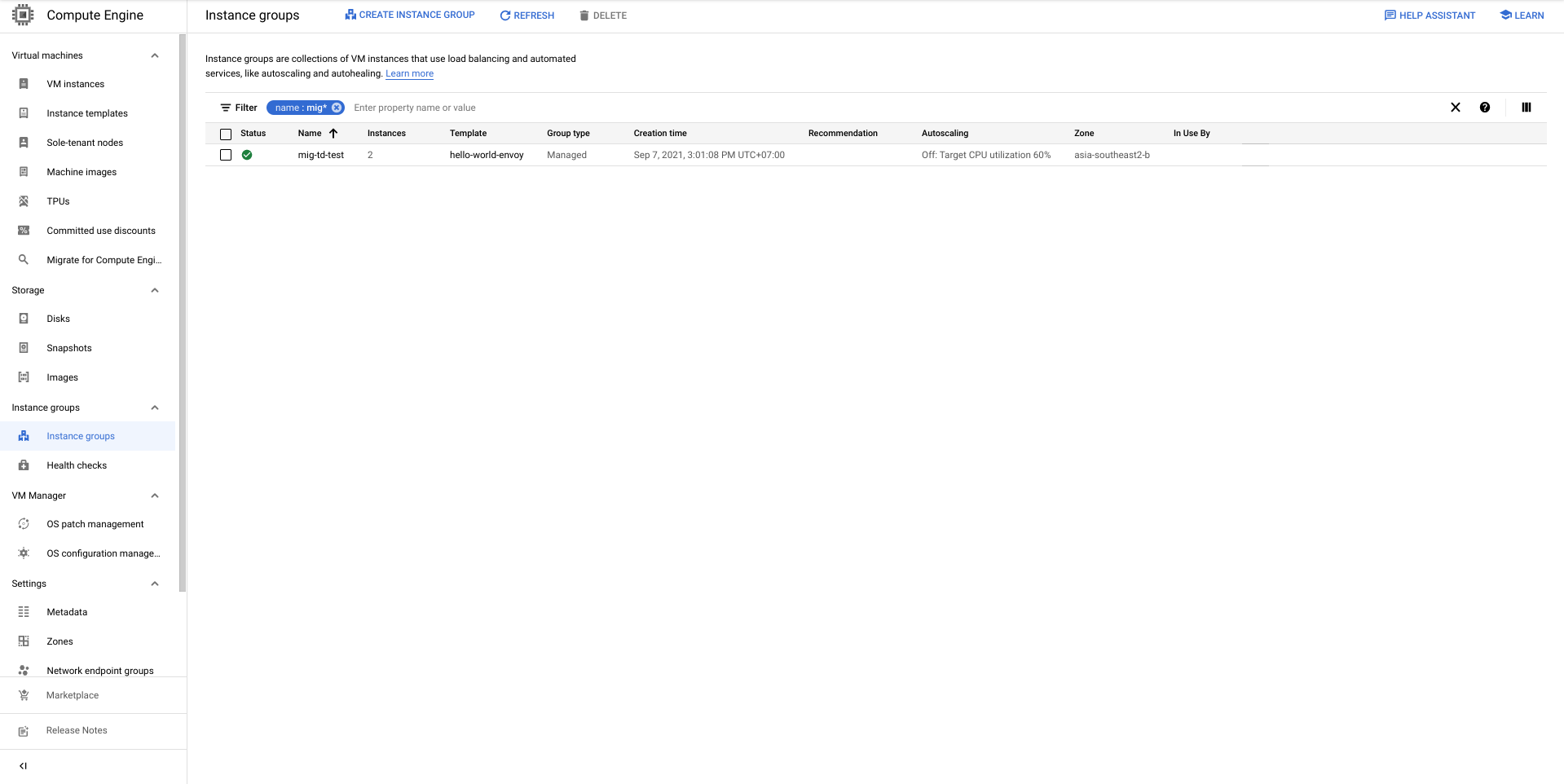
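A rough command-line sketch of step [One] could look like the following. The template name and machine type are placeholders chosen for illustration, not the values from the screenshot, and image and other options are left at their defaults. The `run` helper is a small assumption of this sketch: it prints each command and only executes it when `APPLY=1` is set.

```shell
# Hypothetical name and size; replace with your own.
TEMPLATE_NAME="${TEMPLATE_NAME:-td-vm-template}"
MACHINE_TYPE="${MACHINE_TYPE:-e2-small}"

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

create_template() {
  # --service-proxy=enabled asks Compute Engine to install Envoy on the VM
  # and connect it to Traffic Director.
  run gcloud compute instance-templates create "$TEMPLATE_NAME" \
    --machine-type="$MACHINE_TYPE" \
    --service-proxy=enabled
}

create_template
```

Running the script as-is only shows the command for review; `APPLY=1 sh script.sh` would actually create the template.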

[Two] Create a Managed Instance Group from the template. For simplicity, I turned off the autoscaler and set the number of instances to 2. Go to Compute Engine > Instance Groups > Create Instance Group.

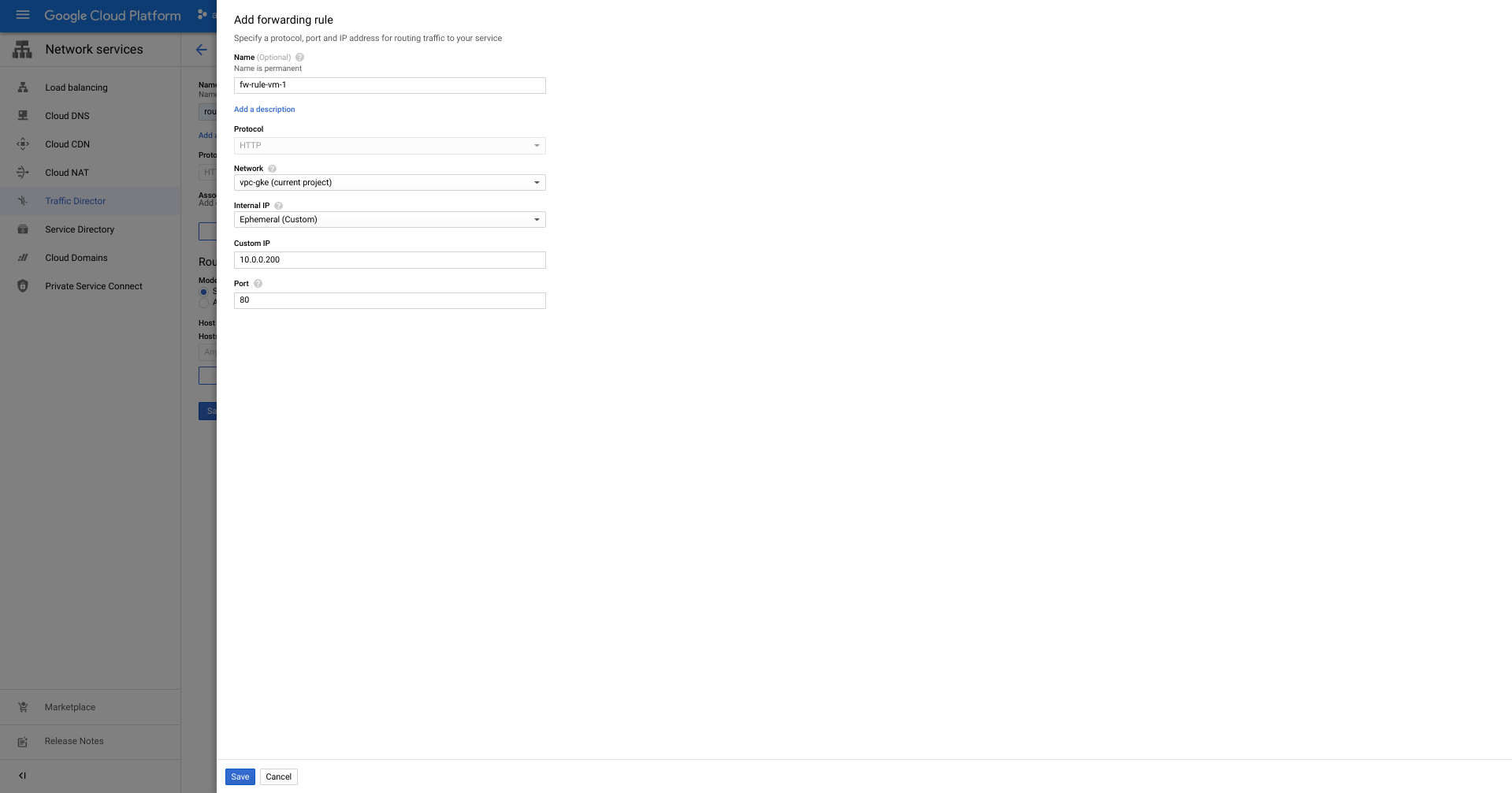
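For those who prefer the command line over the console, step [Two] might be sketched as below. The group name, template name, and zone are assumptions for illustration. As a hedge, the `run` helper only prints the command unless `APPLY=1` is set.

```shell
# Hypothetical names and zone; replace with your own.
TEMPLATE_NAME="${TEMPLATE_NAME:-td-vm-template}"
MIG_NAME="${MIG_NAME:-td-vm-mig}"
ZONE="${ZONE:-us-central1-a}"

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

create_mig() {
  # A fixed size of 2; no autoscaler is attached by this command.
  run gcloud compute instance-groups managed create "$MIG_NAME" \
    --zone="$ZONE" \
    --size=2 \
    --template="$TEMPLATE_NAME"
}

create_mig
```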

[Three] Create the Traffic Director service and configure the routing rule map. Go to Network Services > Traffic Director > Create Service. (Make sure the Traffic Director API is enabled before continuing.)

Configure the service: set the name and the network (VPC), select Instance Group as the backend type, and add the MIG created earlier. Add a health check and make sure the firewall is not blocking it (I had already configured this; for the steps, please see the documentation). Then press Continue.
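The console steps for the service roughly correspond to the gcloud commands sketched below: enabling the API, creating a health check, opening the firewall for Google's health-check ranges, and creating a backend service with the MIG as its backend. All resource names, the network, the zone, and port 80 are assumptions for illustration. The `run` helper prints each command and only executes it when `APPLY=1` is set.

```shell
# Hypothetical names; replace with your own.
NETWORK="${NETWORK:-default}"
ZONE="${ZONE:-us-central1-a}"
MIG_NAME="${MIG_NAME:-td-vm-mig}"
SERVICE_NAME="${SERVICE_NAME:-td-vm-service}"
HEALTH_CHECK="${HEALTH_CHECK:-td-vm-health-check}"
FIREWALL_RULE="${FIREWALL_RULE:-fw-allow-health-checks}"

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

create_service() {
  run gcloud services enable trafficdirector.googleapis.com

  # HTTP health check on port 80 (assumes the VMs serve HTTP there).
  run gcloud compute health-checks create http "$HEALTH_CHECK" --port=80

  # Google Cloud health-check probes originate from these ranges.
  run gcloud compute firewall-rules create "$FIREWALL_RULE" \
    --network="$NETWORK" --direction=INGRESS --action=ALLOW \
    --rules=tcp:80 --source-ranges=35.191.0.0/16,130.211.0.0/22

  # INTERNAL_SELF_MANAGED is the scheme used for Traffic Director services.
  run gcloud compute backend-services create "$SERVICE_NAME" \
    --global --load-balancing-scheme=INTERNAL_SELF_MANAGED \
    --protocol=HTTP --health-checks="$HEALTH_CHECK"

  run gcloud compute backend-services add-backend "$SERVICE_NAME" \
    --global --instance-group="$MIG_NAME" --instance-group-zone="$ZONE"
}

create_service
```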

Now we need to attach a routing rule map: click the Routing Rule Maps tab > Create Routing Rule Map. In Traffic Director, a routing rule map consists of a forwarding rule, a target proxy, and a URL map.
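On the command line, a routing rule map can be approximated by creating its three parts one after another. This is only a sketch: the resource names, the network, and the virtual IP `10.0.0.1` are assumptions for illustration. The `run` helper prints each command and only executes it when `APPLY=1` is set.

```shell
# Hypothetical names and address; replace with your own.
NETWORK="${NETWORK:-default}"
SERVICE_NAME="${SERVICE_NAME:-td-vm-service}"
URL_MAP="${URL_MAP:-td-vm-url-map}"
PROXY="${PROXY:-td-vm-proxy}"
FWD_RULE="${FWD_RULE:-td-vm-forwarding-rule}"
VIP="${VIP:-10.0.0.1}"

# Print each command; execute it only when APPLY=1 is set.
run() {
  echo "+ $*"
  if [ "${APPLY:-0}" = "1" ]; then "$@"; fi
}

create_routing() {
  # URL map: send everything to the backend service by default.
  run gcloud compute url-maps create "$URL_MAP" \
    --default-service="$SERVICE_NAME"

  # Target proxy: ties the URL map to the forwarding rule.
  run gcloud compute target-http-proxies create "$PROXY" \
    --url-map="$URL_MAP"

  # Forwarding rule: the IP and port that clients in the mesh will call.
  run gcloud compute forwarding-rules create "$FWD_RULE" \
    --global --load-balancing-scheme=INTERNAL_SELF_MANAGED \
    --network="$NETWORK" --address="$VIP" --ports=80 \
    --target-http-proxy="$PROXY"
}

create_routing
```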

Now we test by calling the IP of the forwarding rule from a VM.

We can see that the VM is able to call the service through Traffic Director, and the requests are load balanced across the VMs in the MIG. Let's continue the test by creating the GKE cluster and setting up pods with Envoy to communicate with later. This continues in the next post (Part 2).
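One way to see the load balancing is to call the forwarding rule IP several times and count the distinct responses. The sketch below assumes it runs on a VM whose traffic goes through the Envoy proxy, that the IP is `10.0.0.1` (a placeholder), and that each backend answers with something that identifies it, such as its hostname. The requests are only sent when `APPLY=1` is set.

```shell
# Hypothetical forwarding-rule IP and request count; replace with your own.
VIP="${VIP:-10.0.0.1}"
REQUESTS="${REQUESTS:-10}"

# Count how many times each distinct line was seen, most frequent first.
tally() {
  sort | uniq -c | sort -rn
}

# Send REQUESTS requests to the VIP and print one response per line.
probe() {
  i=0
  while [ "$i" -lt "$REQUESTS" ]; do
    # Flatten each response body to a single line so it can be counted.
    curl -s --max-time 5 "http://${VIP}/" | tr -d '\n'
    echo
    i=$((i + 1))
  done
}

if [ "${APPLY:-0}" = "1" ]; then
  probe | tally
fi
```

If more than one distinct response shows up in the tally, requests are being spread across more than one backend.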

Johanes Glenn

Customer Engineer Infra/App Mod, Google

@alevz