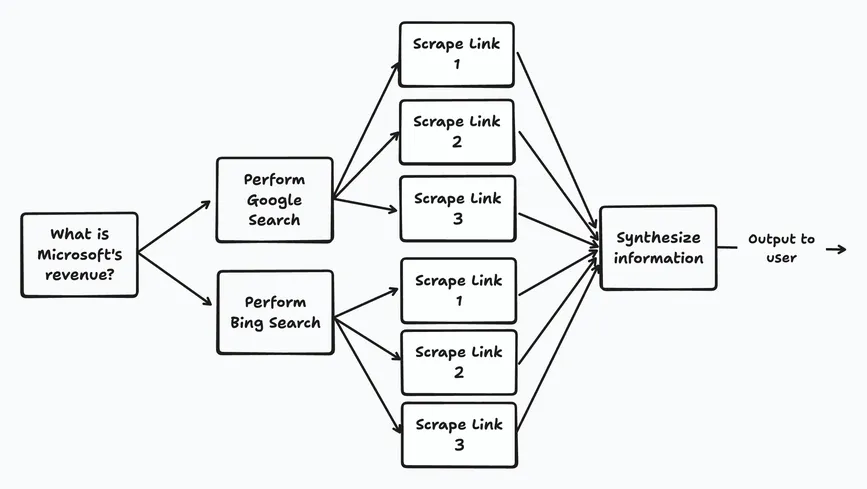

The note says AI workloads are bursty. They spawn parallel tool calls, pull multi‑GB model weights into RAM, and endure long cold starts (e.g., vLLM, SGLang). Companies wrestle with a fragmented GPU market and poor peak GPU utilization. To hit latency, compliance, and cost targets they adopt multi‑region/multi‑cloud setups or partner with serverless compute.

Start writing about what excites you in tech — connect with developers, grow your voice, and get rewarded.

Join other developers and claim your FAUN.dev() account now!

DevOpsLinks #DevOps

FAUN.dev()

@devopslinksDevOps Weekly Newsletter, DevOpsLinks. Curated DevOps news, tutorials, tools and more!

Developer Influence

9

Influence

1

Total Hits

137

Posts