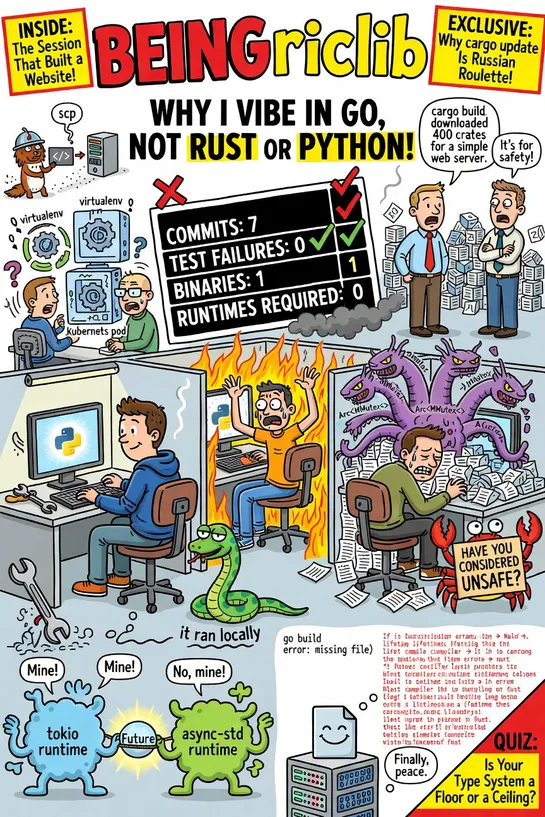

Why I Vibe in Go, Not Rust or Python

In a world where the machine writes most of the code, Python lacks solid type enforcement and Rust is overly strict with its complex lifetimes, while Go strikes the right balance, catching critical issues without hindering development velocity. The article argues in favor of Go over Python and Rust for A..