Skill Issues: How We Discovered Supply Chain Attack Vectors in an AI Agent Skills Marketplace

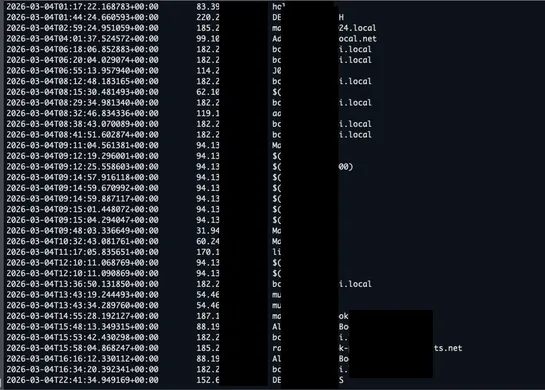

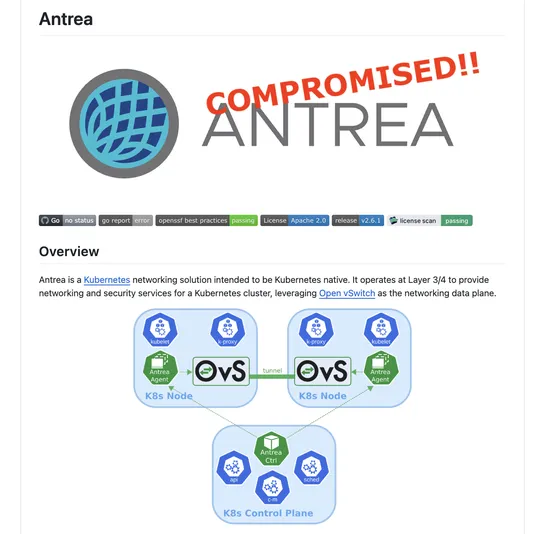

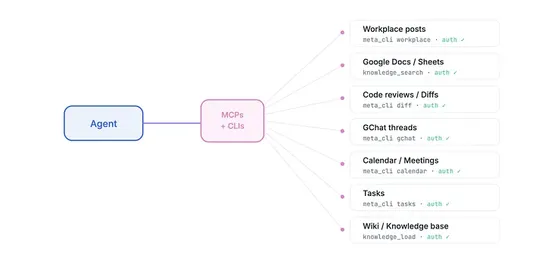

Orca Security researchers identified four attack primitives in an AI coding-agent skills marketplace: install-count inflation without authentication, security scans at creation and popularity thresholds, same-name overrides without user alerts, and bulk updates without per-skill review or version pi..
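
As a rough illustration of how a client could defend against two of these primitives (same-name overrides and bulk updates without per-skill review), here is a minimal Python sketch. It is not the marketplace's API or the researchers' tooling: the `Skill` record, its `source` and `digest` fields, and both helper functions are hypothetical names introduced for this example. It assumes only that each skill has a name, a publisher, and a content hash.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    # All fields are hypothetical; a real marketplace may expose different metadata.
    name: str    # skill name as the agent resolves it
    source: str  # publisher or repository identifier
    digest: str  # content hash of the skill bundle


def find_name_collisions(skills):
    """Return {name: sorted sources} for names claimed by more than one source."""
    sources_by_name = defaultdict(set)
    for skill in skills:
        sources_by_name[skill.name].add(skill.source)
    return {
        name: sorted(sources)
        for name, sources in sources_by_name.items()
        if len(sources) > 1
    }


def find_changed_skills(installed, incoming):
    """Return names of already-installed skills whose content differs in a bulk update."""
    installed_digests = {(s.name, s.source): s.digest for s in installed}
    return sorted(
        s.name
        for s in incoming
        # Skip skills that are not installed (None) or whose content is unchanged.
        if installed_digests.get((s.name, s.source)) not in (None, s.digest)
    )


if __name__ == "__main__":
    installed = [Skill("fmt", "alice", "aaa"), Skill("lint", "bob", "bbb")]
    incoming = [
        Skill("fmt", "alice", "aaa"),
        Skill("lint", "bob", "ccc"),     # same publisher, changed content
        Skill("fmt", "mallory", "zzz"),  # same name, different publisher
    ]
    print(find_name_collisions(incoming))
    print(find_changed_skills(installed, incoming))
```

A client could surface the first result as a warning before resolving a skill by name, and require explicit approval for each entry in the second before applying an update.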