We Might All Be AI Engineers Now

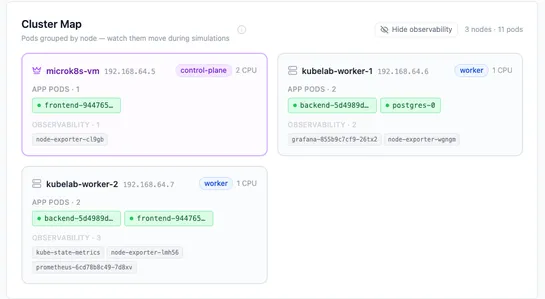

The author supervises AI agents that orchestrate concurrent graph traversal, multi-layer hashing, AST parsing, and file system watchers. The engineer architects system behavior, verifies outputs, and probes agents in parallel to debug...