How Netflix optimized its petabyte-scale logging system with

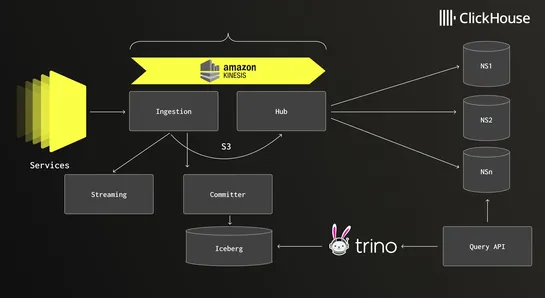

Netflix overhauled its logging pipeline to chew through 5 PB/day. The stack now leans on ClickHouse for speed and Apache Iceberg to keep storage costs sane. Out went regex fingerprinting - slow and clumsy. In came a JFlex-generated lexer that actually keeps up. They also ditched generic serialization in fa..