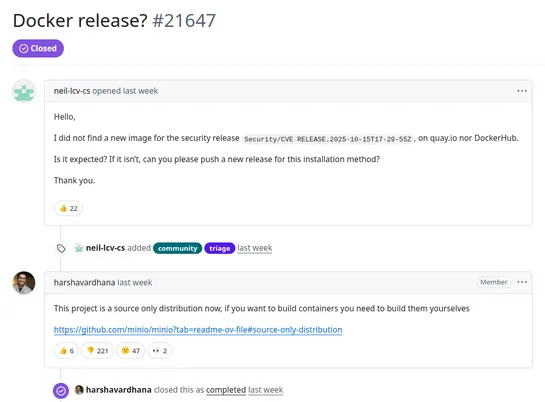

MinIO Pulls Docker Images and Documentation - Community Calls Move "Malicious" and "Lock-In Strategy"

MinIO transitions to a source-only distribution model and stops providing pre-compiled Docker images, sparking community concerns over security and feature removal.